A Crypto Exchange with 100k users

Overview

In the early days of the blockchain and crypto trend, OpsSpark had the pleasure of working with a large Singaporean cryptocurrency exchange as our first client. The OpsSpark team helped the customer deploy the whole system with dynamic scaling and security built in. The customer's system consists of a trading platform, payment gateway, KYC portal, blockchain nodes, and more.

Challenges

- The client's infrastructure consisted of more than 50 hosted servers running the following systems: a trading platform, payment gateway, KYC portal, blockchain nodes, and more.

- The platform's user base was dramatically increasing as a result of industry trends.

- The client wanted to move their whole system to Google Cloud for better performance, easier management, and more advanced continuous integration and deployment.

- The infrastructure needed to scale up in response to increasing traffic and requests.

- At that time, blockchain technology was still at an early stage, so the system needed frequent updates to adapt to the ever-changing landscape.

- The system needed to be managed efficiently and updated regularly without critical operational errors or system failures, while being continuously monitored to ensure its web services achieved five nines (99.999%) uptime.
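
An uptime target expressed as a percentage, such as 99.999% ("five nines"), translates into a concrete downtime budget. The short sketch below shows that arithmetic; the function name and the 365-day year are assumptions made for illustration, not part of the client's tooling.

```python
def downtime_budget_minutes(availability_percent: float, period_days: float = 365.0) -> float:
    """Return the maximum downtime, in minutes, allowed over the period."""
    if not 0.0 <= availability_percent <= 100.0:
        raise ValueError("availability_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    # The allowed downtime is the fraction of the period that may be unavailable.
    return total_minutes * (1.0 - availability_percent / 100.0)


if __name__ == "__main__":
    for target in (99.9, 99.99, 99.999):
        budget = downtime_budget_minutes(target)
        print(f"{target}% availability allows {budget:.2f} minutes of downtime per year")
```

At 99.999%, the budget works out to roughly 5.26 minutes of downtime per year, which is why updates and failovers have to happen without taking the services offline.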

Solution

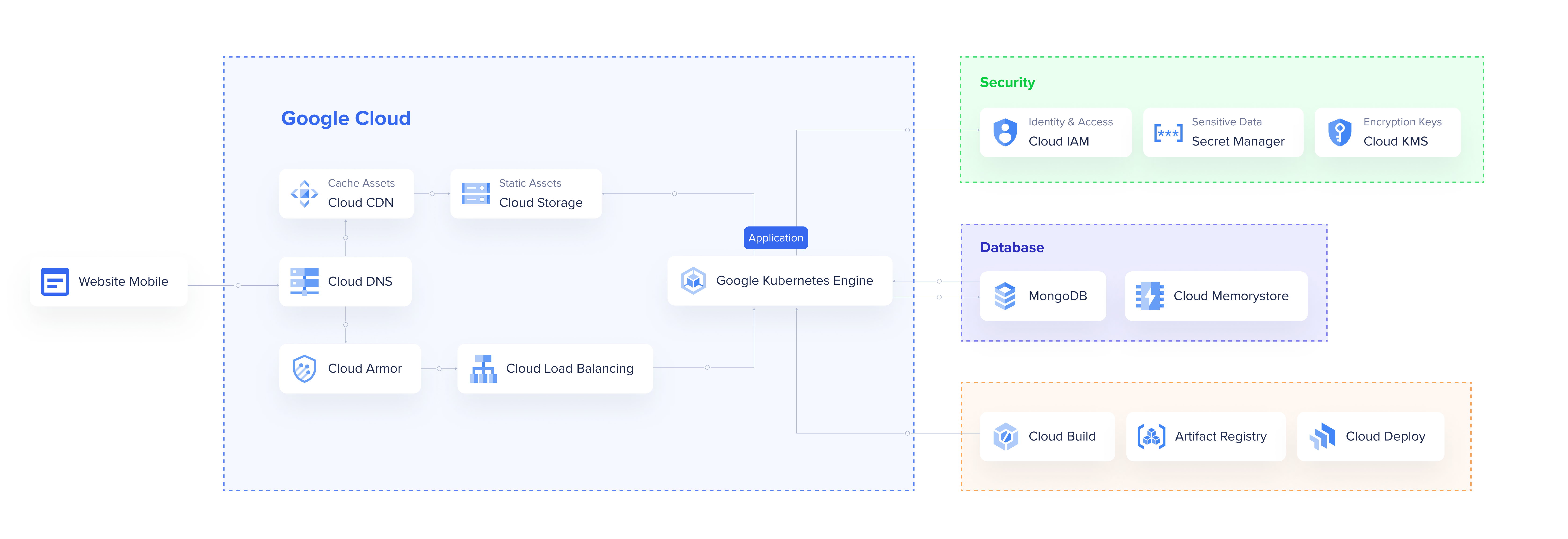

The team of DevOps experts at OpsSpark designed the architecture to meet all of the customer’s infrastructure requirements.

We applied development (Dev) and operations (Ops) mindsets to significantly accelerate the delivery of the exchange's features, reduce bugs, and shorten the release cycle for improvements by building CI/CD, integrating testing, and applying system security.

CI/CD pipeline implementation

Using GitLab as the source code repository and TeamCity as the CI/CD automation tool, our DevOps engineers designed a CI/CD pipeline to speed up developing, testing, and releasing updates and bug fixes for the customer’s web applications, which were built on the following stacks: Nginx, Apache Tomcat + Java, NodeJS, and WordPress.

The OpsSpark team used Docker to containerize the web applications, ran them on GCP's Container-Optimized OS, and used GCP Container Registry to manage the container images. For configuration management and application deployment, OpsSpark implemented Ansible.

With the CI/CD pipeline in place, OpsSpark eliminated the differences between the development, testing, staging, and production environments, and automated integration and performance testing.
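
One common way to keep environments identical is to build each container image once, tag it with the commit that produced it, and promote that same image from testing through to production. The sketch below illustrates such a tagging convention. It is an assumption for illustration and not the naming scheme the team actually used; the project and image names are hypothetical, and `gcr.io` is the Container Registry hostname.

```python
import re


def image_reference(project_id: str, image_name: str, commit_sha: str,
                    registry_host: str = "gcr.io") -> str:
    """Build an image reference tagged with a shortened Git commit SHA."""
    # Accept abbreviated or full hexadecimal SHAs only, so a tag always maps to a commit.
    if not re.fullmatch(r"[0-9a-f]{7,40}", commit_sha):
        raise ValueError("commit_sha must be 7 to 40 lowercase hexadecimal characters")
    return f"{registry_host}/{project_id}/{image_name}:{commit_sha[:12]}"


if __name__ == "__main__":
    # Hypothetical project, image, and commit values.
    print(image_reference("example-project", "trading-web",
                          "0123456789abcdef0123456789abcdef01234567"))
```

Because the tag is derived from the commit, the image tested in staging is byte-for-byte the one deployed to production.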

Traffic handling

To achieve proper load balancing, network traffic distribution, and HTTP caching across the customer’s IT infrastructure, our DevOps engineers used the following tools:

- GCP Load Balancer – to distribute the requests across multiple servers in different zones while ensuring high availability.

- GCP Instance Group – to increase the number of servers when traffic load is high.

- GCP Cloud SQL – to provide a reliable, fully managed relational database service for MySQL with automatic backups.
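
The scaling behavior of an instance group can be pictured as sizing the group so that average utilization stays near a target. The sketch below is a simplified illustration of that idea, not GCP's actual autoscaler algorithm; the function name, the 60% default target, and the instance bounds are all assumptions.

```python
import math


def desired_instances(current_instances: int, avg_utilization: float,
                      target_utilization: float = 0.6,
                      min_instances: int = 2, max_instances: int = 20) -> int:
    """Estimate how many instances keep average utilization near the target."""
    if current_instances < 1:
        raise ValueError("current_instances must be at least 1")
    if not 0.0 < target_utilization <= 1.0:
        raise ValueError("target_utilization must be in (0, 1]")
    # Total load expressed in "fully busy instance" units.
    total_load = current_instances * avg_utilization
    needed = math.ceil(total_load / target_utilization)
    # Never go below the floor (for availability) or above the ceiling (for cost).
    return max(min_instances, min(max_instances, needed))


if __name__ == "__main__":
    # Four instances running at 75% with a 50% target suggests growing the group.
    print(desired_instances(4, 0.75, target_utilization=0.5))
```

Keeping a minimum above one instance reflects the multi-zone setup described above: the group should survive the loss of a single server even when traffic is low.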

Log management, monitoring and alerts

To monitor disk usage, RAM, and CPU consumption, our DevOps engineers set up Prometheus and Grafana.

For uptime monitoring, the OpsSpark team used a set of Python monitoring scripts to check web service availability and send important alerts to a Telegram channel.
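
A minimal sketch of what such an uptime check might look like follows. It is not the team's actual script: the function names are hypothetical, and the bot token, chat ID, and URLs are placeholders. The alert function uses the `sendMessage` method of the Telegram Bot API.

```python
import urllib.error
import urllib.parse
import urllib.request
from typing import Callable, Iterable, List

TELEGRAM_API = "https://api.telegram.org"


def is_up(url: str, timeout: float = 5.0) -> bool:
    """Return True if the URL answers with a 2xx or 3xx status within the timeout."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return 200 <= response.status < 400
    except (urllib.error.URLError, OSError, ValueError):
        # HTTP errors, connection failures, timeouts, and malformed URLs count as down.
        return False


def send_telegram_alert(bot_token: str, chat_id: str, text: str,
                        timeout: float = 5.0) -> None:
    """Post a message to a chat through the Telegram Bot API."""
    url = f"{TELEGRAM_API}/bot{bot_token}/sendMessage"
    data = urllib.parse.urlencode({"chat_id": chat_id, "text": text}).encode("utf-8")
    with urllib.request.urlopen(url, data=data, timeout=timeout):
        pass


def check_services(urls: Iterable[str],
                   probe: Callable[[str], bool] = is_up,
                   alert: Callable[[str], None] = print) -> List[str]:
    """Probe each URL once, alert on every failure, and return the failed URLs."""
    down = [url for url in urls if not probe(url)]
    for url in down:
        alert(f"ALERT: {url} is not responding")
    return down


# Example wiring (placeholders, not real credentials or endpoints):
# check_services(
#     ["https://exchange.example/health"],
#     alert=lambda text: send_telegram_alert("<bot-token>", "<chat-id>", text),
# )
```

Passing the probe and alert functions as parameters keeps the check logic testable without network access; a scheduler such as cron could run it at a fixed interval.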

For convenient web application log management, the OpsSpark team applied the ELK stack. Logstash parsed the web application logs and sent them to Elasticsearch, where the logs were collected and filtered. Kibana and Grafana then used the data stored in Elasticsearch to build data visualizations and derive analytics.
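
To illustrate the parsing step, the sketch below turns one access-log line into a structured event of the kind that gets indexed in Elasticsearch. In Logstash this would normally be done with a grok filter; the Python version here is only an illustration. It assumes Nginx's combined log format, and the field names and sample line are invented.

```python
import json
import re
from typing import Optional

# Pattern for Nginx's "combined" access-log format (assumed, not taken from the project).
LOG_PATTERN = re.compile(
    r'(?P<client_ip>\S+) \S+ (?P<user>\S+) \[(?P<timestamp>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) (?P<protocol>[^"]+)" '
    r'(?P<status>\d{3}) (?P<bytes>\d+|-) '
    r'"(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"'
)


def parse_access_log_line(line: str) -> Optional[dict]:
    """Parse one access-log line into a dict, or return None if it does not match."""
    match = LOG_PATTERN.match(line)
    if match is None:
        return None
    event = match.groupdict()
    # Convert numeric fields so they can be aggregated and filtered as numbers.
    event["status"] = int(event["status"])
    event["bytes"] = 0 if event["bytes"] == "-" else int(event["bytes"])
    return event


if __name__ == "__main__":
    sample = ('203.0.113.7 - - [10/Oct/2019:13:55:36 +0000] '
              '"GET /api/v1/ticker HTTP/1.1" 200 512 "-" "curl/7.64.0"')
    print(json.dumps(parse_access_log_line(sample), indent=2))
```

Once fields such as `status` and `path` are structured, dashboards can chart error rates or slow endpoints instead of searching raw text.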

Security

OpsSpark used Google Cloud Armor to filter incoming traffic and protect the website against denial-of-service and web attacks. The team separated the testing, staging, and production environments using VPC networking. Access to internal services was restricted using firewall rules, subnet division, and a VPN.
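
Subnet division of this kind can be sketched with Python's standard `ipaddress` module. The example below splits one address range into equal, non-overlapping subnets, one per environment. The `10.0.0.0/16` range and the function names are assumptions for illustration; the client's real addressing plan is not described here.

```python
import ipaddress
from typing import Dict, List, Optional


def plan_subnets(vpc_cidr: str, environments: List[str]) -> Dict[str, ipaddress.IPv4Network]:
    """Assign each environment its own equal-sized, non-overlapping subnet."""
    if not environments:
        raise ValueError("at least one environment is required")
    network = ipaddress.ip_network(vpc_cidr)
    # Number of extra prefix bits needed so there are enough subnets to go around.
    extra_bits = (len(environments) - 1).bit_length()
    subnets = network.subnets(prefixlen_diff=extra_bits)
    return dict(zip(environments, subnets))


def environment_of(plan: Dict[str, ipaddress.IPv4Network], address: str) -> Optional[str]:
    """Return the environment whose subnet contains the address, if any."""
    ip = ipaddress.ip_address(address)
    for name, subnet in plan.items():
        if ip in subnet:
            return name
    return None


if __name__ == "__main__":
    plan = plan_subnets("10.0.0.0/16", ["testing", "staging", "production"])
    for name, subnet in plan.items():
        print(f"{name}: {subnet}")
    print(environment_of(plan, "10.0.70.5"))
```

With each environment in its own range, firewall rules can be written per subnet, for example allowing the VPN range to reach staging while blocking traffic between testing and production.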

Achievement

- After the solutions were implemented, the customer was able to manage their infrastructure securely, efficiently, and conveniently on a highly available architecture.

- All components of the exchange were built to scale out as traffic and workload grow, with the load distributed evenly across servers in multiple regions around the world.

- For performance, the exchange's matching engine can reach 1 million orders per second.

- At peak traffic, load balancing runs automatically, with no need to rewrite or redeploy the backend.